A few days ago we noticed that a WooCommerce site hosted on our platform was using far more CPU capacity than its number of visitors would suggest. The site already used all of the top three measures for improving speed and reducing CPU usage, in this case LiteSpeed cache, APCu object cache and Google Cloud CDN. Still, we noticed that this site, with around 500 visitors a day, had higher CPU usage than a site with 40,000 visitors a day.

What is the problem with WooCommerce and “add-to-cart” links?

The problem is not WooCommerce itself or the format of the “add-to-cart” links; the problem is that “add-to-cart” pages are not cacheable. Closte uses the LiteSpeed WordPress cache, which is fully compatible with WooCommerce and features an integrated monitoring system that reports statistics for three parameters: Cache-Hit, Cache-Miss, and Uncacheable. Let’s look at these parameters briefly.

- Cache-Hit – the number of requests that have received a cached response. These requests do not utilize CPU capacity or memory, and you should always try to keep this number high.

- Cache-Miss – the number of requests that were not in the cache at the time of the request; a cached copy is generated for subsequent requests.

- Uncacheable – the number of requests for pages that cannot be cached. On a WooCommerce site this most often happens when a user is logged in, adds products to the shopping cart, or views the cart.

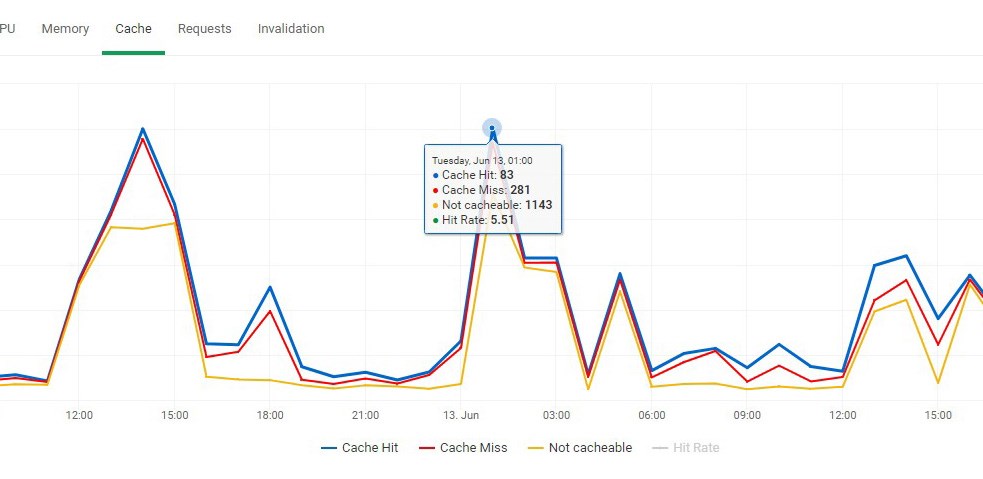

In most cases, Cache-Hit should be higher than Cache-Miss and Uncacheable; otherwise, your site will use a significant amount of CPU capacity and memory. The picture below shows that in a given hour the site achieved a cache hit rate of only 5.5%.
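To make the hit rate concrete, here is a minimal Python sketch of how such a percentage could be derived from the three counters. It assumes the rate is Cache-Hit divided by all requests; the function name `cache_hit_rate` and the sample counts are hypothetical and are not the figures from the monitored site.

```python
def cache_hit_rate(hits: int, misses: int, uncacheable: int) -> float:
    """Return the share of requests answered from cache, as a percentage.

    Assumption: the rate is hits divided by all requests
    (hits + misses + uncacheable).
    """
    total = hits + misses + uncacheable
    if total == 0:
        return 0.0  # no traffic in the period, nothing to report
    return 100.0 * hits / total


# Illustrative counts only, not taken from the site discussed here.
print(f"{cache_hit_rate(hits=50, misses=150, uncacheable=800):.1f}%")  # prints 5.0%
```

A site dominated by uncacheable requests ends up with a low rate no matter how well the cache itself is configured.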

These 1143 uncacheable requests far outnumber the Cache-Hit and Cache-Miss requests, and each one uses CPU capacity, memory and, in this case (as you’ll see below), additional bandwidth. What is surprising is that this WooCommerce site has so many uncacheable requests. Does that mean that all of our visitors are logged in, or visit only “add-to-cart” and “cart” pages? Seems a little odd, doesn’t it?

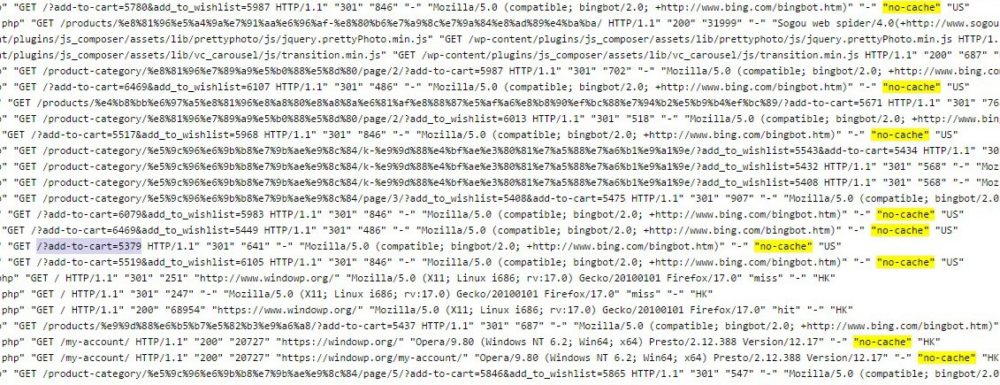

The next thing we checked was the access logs, to see which URLs were being requested without being served from cache.

While checking the access logs, we noticed right away that bots such as Googlebot, Bingbot, and others were crawling “add-to-cart” links. These links cannot be cached (they partly can, but we will cover that in another post) and there is no need for them to be crawled or indexed; otherwise, they slow down your WooCommerce site by spending processing power on unnecessary requests.
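If you want to run the same check on your own logs, a small script can do the counting. The following Python sketch assumes the access log uses the common "combined" format; the regular expression, the `BOT_MARKERS` list, the function name and the sample lines (with documentation-range IP addresses) are all hypothetical and would need adjusting to your server.

```python
import re
from collections import Counter

# Assumption: "combined" log format, i.e.
# ip - - [date] "METHOD path PROTO" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Lowercase substrings used to recognise crawlers in the User-Agent header.
BOT_MARKERS = ("googlebot", "bingbot")


def count_bot_add_to_cart(lines):
    """Count add-to-cart requests per bot marker found in the user agent."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "add-to-cart=" not in match.group("path"):
            continue  # unparsable line or not an add-to-cart request
        agent = match.group("agent").lower()
        for marker in BOT_MARKERS:
            if marker in agent:
                counts[marker] += 1
    return counts


# Made-up sample lines for illustration.
SAMPLE_LINES = [
    '203.0.113.5 - - [01/Jan/2018:10:00:00 +0000] "GET /shop/?add-to-cart=42 HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '203.0.113.9 - - [01/Jan/2018:10:00:01 +0000] "GET /shop/?add-to-cart=7 HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; bingbot/2.0)"',
    '203.0.113.9 - - [01/Jan/2018:10:00:02 +0000] "GET /shop/ HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; bingbot/2.0)"',
    '203.0.113.7 - - [01/Jan/2018:10:00:03 +0000] "GET /shop/?add-to-cart=42 HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

print(count_bot_add_to_cart(SAMPLE_LINES))
```

In practice you would pass an open log file instead of `SAMPLE_LINES`, since the function only needs an iterable of lines.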

How to prevent robots from crawling “add-to-cart” links?

We must point out that some WooCommerce themes perform the “add-to-cart” function via JavaScript, so bots never see these links, while other themes place “add-to-cart” links directly in the HTML. You can easily check this in the HTML source code (Chrome: right-click -> View Page Source).
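The same check can be scripted instead of reading the page source by hand. This is only a sketch using Python's standard `html.parser`; the class name `AddToCartLinkFinder`, the helper `find_add_to_cart_links` and the sample markup are hypothetical, and your theme's markup may differ.

```python
from html.parser import HTMLParser


class AddToCartLinkFinder(HTMLParser):
    """Collect href values of <a> tags that contain 'add-to-cart='."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href") or ""
        if "add-to-cart=" in href:
            self.links.append(href)


def find_add_to_cart_links(html: str) -> list:
    """Return all add-to-cart hrefs present in the raw HTML."""
    finder = AddToCartLinkFinder()
    finder.feed(html)
    return finder.links


# Made-up markup for illustration.
sample_html = (
    '<a href="/shop/?add-to-cart=42" class="button">Add to cart</a>'
    '<a href="/product/hat/">Hat</a>'
)
print(find_add_to_cart_links(sample_html))  # prints ['/shop/?add-to-cart=42']
```

An empty result on the downloaded page source suggests the theme adds the links via JavaScript, since this parser only sees the static HTML.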

However, whether your “add-to-cart” links are generated via JavaScript or placed directly in the HTML, it is recommended to stop robots from crawling them. All you have to do is add rules to the /robots.txt file that tell robots not to request “add-to-cart” links.

    User-agent: *
    Disallow: /*add-to-cart=*

Speaking of robots.txt rules, it is a good idea to exclude a few more pages.

    Disallow: /cart/
    Disallow: /checkout/
    Disallow: /my-account/
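Before deploying such rules, it can help to test which URLs they would block. The sketch below is a hand-rolled Python matcher, not the logic of any particular crawler. It assumes the widely used wildcard semantics where `*` matches any run of characters and a trailing `$` anchors the end of the URL, and it only evaluates Disallow rules (no Allow precedence). The names `rule_to_regex` and `is_disallowed` are hypothetical.

```python
import re

# The Disallow rules discussed in this post.
DISALLOW_RULES = ["/*add-to-cart=*", "/cart/", "/checkout/", "/my-account/"]


def rule_to_regex(rule: str) -> "re.Pattern":
    """Translate one robots.txt path rule into a compiled regex.

    Assumption: '*' matches any sequence of characters and a trailing '$'
    anchors the end of the URL; everything else is matched literally.
    """
    anchored = rule.endswith("$")
    if anchored:
        rule = rule[:-1]
    pattern = ".*".join(re.escape(part) for part in rule.split("*"))
    return re.compile(pattern + ("$" if anchored else ""))


def is_disallowed(path: str, rules=DISALLOW_RULES) -> bool:
    """Return True if the path (including query string) matches any rule."""
    # re.match anchors at the start, so each rule acts as a prefix pattern.
    return any(rule_to_regex(rule).match(path) for rule in rules)


for path in ["/shop/?add-to-cart=42", "/cart/", "/shop/", "/product/cart/"]:
    print(path, is_disallowed(path))
```

Here the first two paths are reported as blocked and the last two are not, because `/cart/` only matches at the start of the path.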

With these rules, robots will no longer crawl your “add-to-cart” links or the other pages listed above, which are also not cacheable, so your site will use much less CPU, memory and, in this case, bandwidth. Now that we have added the rules to /robots.txt, let’s see what we gained.

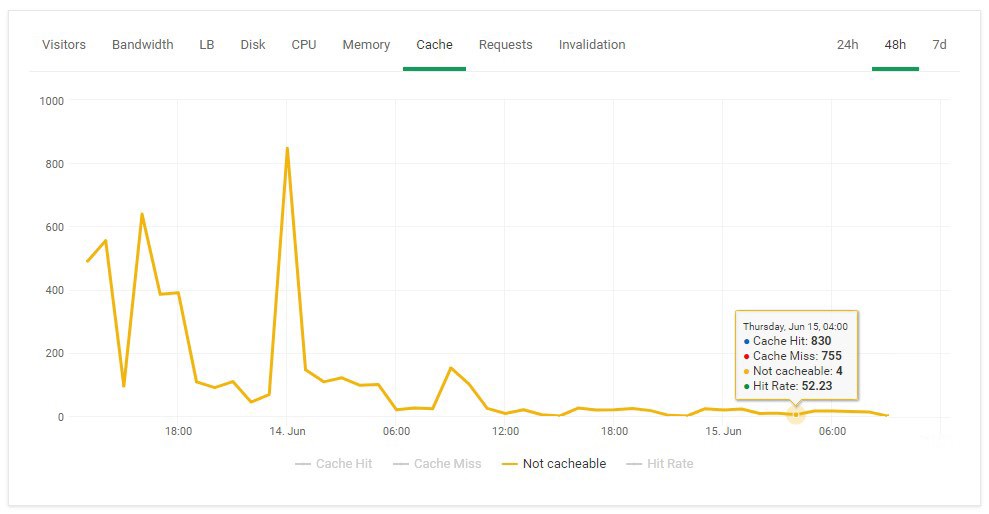

1. The number of uncacheable requests is considerably reduced

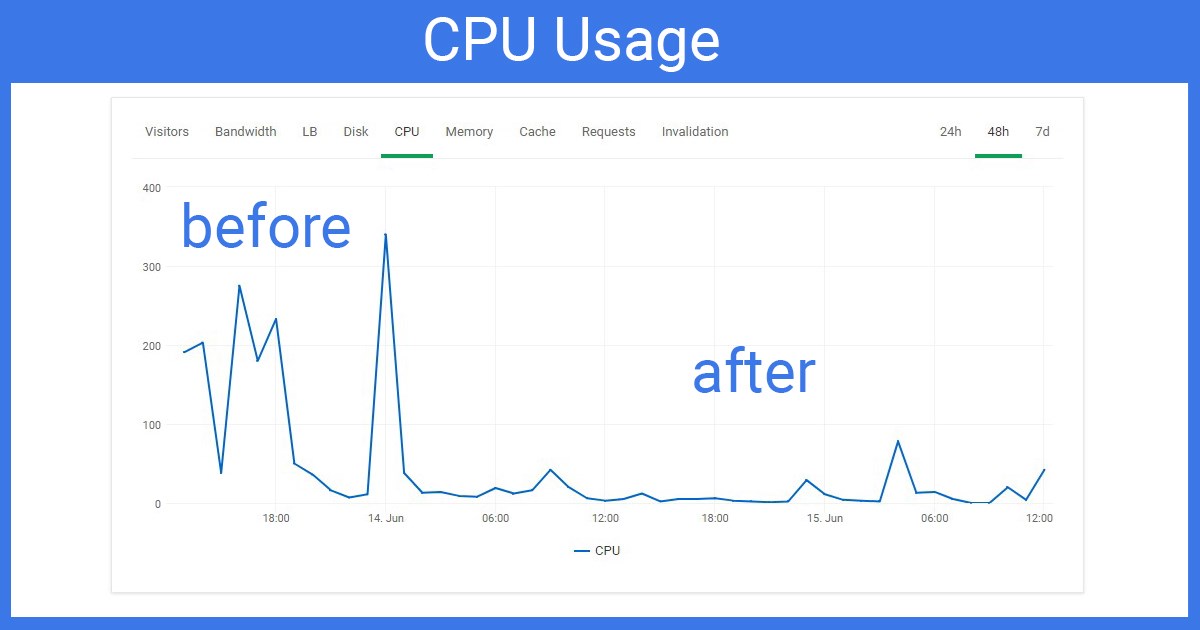

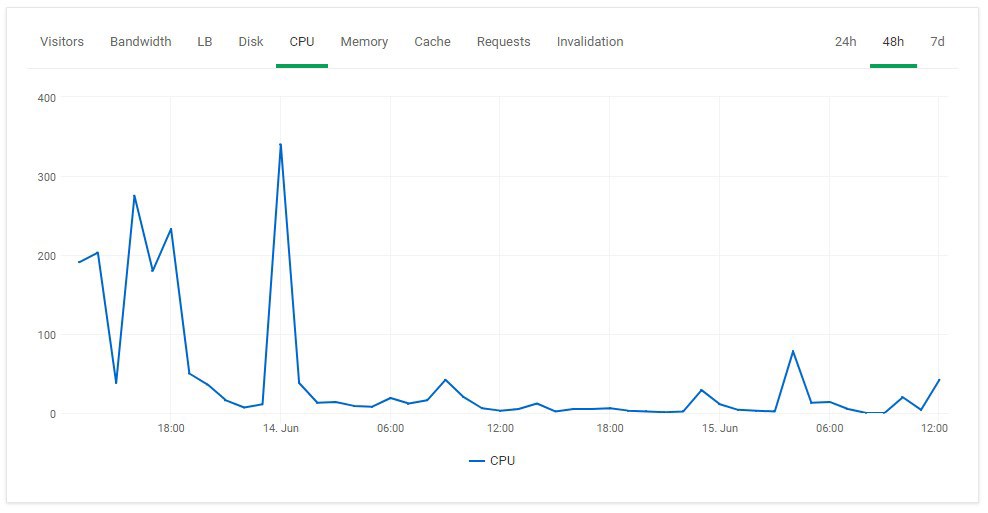

2. Reduced CPU usage

The high CPU usage caused by the unnecessary crawling of “add-to-cart” and other links is gone; your WooCommerce site therefore has more processing power for the other, “important” pages.

Conclusion

We saw that with the cache monitoring system you can easily notice when something strange is going on with your WooCommerce / WordPress site. You should always try to keep the number of Cache-Hit requests high so that your site does not use much CPU and memory.

Once you notice odd numbers, analyze the access logs to see which URLs cannot be cached by the server and then do something about it. In this case, we just had to stop robots from crawling “add-to-cart” and other similar links.

Since we charge for CPU utilization, this will decrease your monthly cost. Other hosting providers might simply suspend you or slow down your WooCommerce / WordPress site, even though you have everything set up to improve speed and reduce CPU utilization.